Agentic Geometry

BIM and the current limitations for agents

a16z’s team put out a fantastic piece this week on the challenge with Revit and BIM for the built world.

Funny enough, this has been a major focus for me lately, so I thought I’d lay out why the BIM opportunity matters and give a taste of a few things I’ve been working on.

There are essentially two ways to think about BIM:

First, in order to understand BIM, you need to understand the utility of BIM in the built world. BIM = Building Information Modeling:

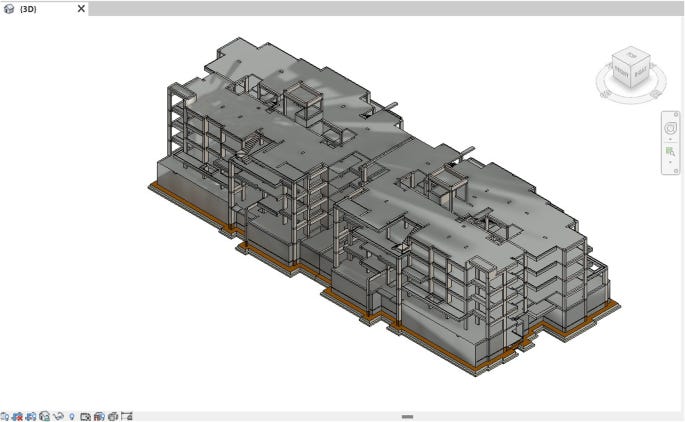

Many teams and personas collaborate on a BIM model. An architect will translate design intent into the building shell and floor layouts, exteriors, and interiors; engineers will plan MEP routing and structural integrity; contractors, schedulers, and more will then use this to plan and subsequently build the building.

BIM is useful. Construction firms are among the most operationally competent organizations in the world, and major projects are often planned and executed with extraordinary rigor. Yet, there’s a fundamental problem with BIM as a discipline:1

It doesn’t move at the speed of agents. We will get to why in a second, but let’s first talk about what this practically means:

First, let’s assume that we are still in the precon/design phase of a project, and a structural engineer, upon reviewing the current model, tells the architect that they need to increase the slab by an inch and a half.

What happens next is that an architect has to revise the model, adjust the slab, extend the walls, and then verify that the downstream drawings still reconcile.

For a seemingly minor update on a building (and this isn’t an overly complex building), an architect can look to spend upwards of 6 hours adjusting the model.

Are there pathways out? Sure. These usually involve scripting in Revit (which Claude Code and others have made easier). To one of a16z’s points, perhaps this represents a pathway to better AI outcomes in AEC with BIM.

However, in my view, this is simply not sufficient. But to get there, perhaps we should first consider the alternative. What do we want agents to be able to do in BIM? Is this about mere time savings or something else altogether?

For a second, let’s assume that we have truly agentic BIM, i.e. agents actually build and maintain models. What are the second order consequences?

Faster modeling means more models.

This one is easy. If the cost to build full-fledged models drops significantly, the number of models grows with it. Architects and engineers can now propose dozens of design permutations at the precon stage and turn them into fully engineered models within a matter of hours.

Cost and review time go down with impacts on total cost and project speed.

Construction often orbits around constructability reviews, clash detections, and plenty more to ensure that when a project moves from the design phase to the build phase, the project is actually constructable.

RFIs and other construction processes change

ZeroRFI recently launched as an owner’s representation firm rollup with the goal of enabling “zero RFIs”, i.e. requests for information. RFIs usually occur when plans/drawings aren’t sufficient for a contractor implementing an aspect of the project to gauge design and build intent. BIMs nominally were supposed to address this by carrying large amounts of metadata around materials, scheduling, costs, and more. There are now more RFIs than ever.

Solve BIM in an agentic native way and guess what? RFIs don’t go to zero, they simply become questions run against the agentic BIM model.

BIM is upstream of all of this and these are all real time and money costs on a construction project. But they aren’t even the full benefit here.

Let’s keep going with even more speculative (but fully realistic) impacts.

Scheduling:

Currently a construction project is scheduled via master schedulers and project controls teams. These teams are essentially oracles that can synthesize the entire project progression, resource constraints, and time constraints and get buildings built on time.

There’s been movement towards 4-D BIM scheduling, where a firm takes the schedule and the BIM itself and shows the building itself going up over time in accordance with the schedule. This is currently highly expensive, but highly useful for projects where it may not be obvious from glancing at a model and a schedule if crews will be overlapping work areas leading to slowdown.
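To make the "crews overlapping work areas" check concrete, here is a minimal sketch of what a 4-D query boils down to: two tasks conflict if they overlap in time and in space. Everything here is hypothetical and for illustration only: the `Task` fields, the crew names, and the flat rectangular work zones are assumptions, and a real 4-D BIM workflow would pull zones from model geometry rather than hand-typed boxes.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Task:
    name: str
    crew: str
    start_day: int   # first working day (inclusive)
    end_day: int     # day the crew leaves (exclusive)
    zone: tuple      # work area as (xmin, ymin, xmax, ymax), in meters


def overlaps_in_time(a: Task, b: Task) -> bool:
    # Two half-open intervals overlap when each starts before the other ends.
    return a.start_day < b.end_day and b.start_day < a.end_day


def overlaps_in_space(a: Task, b: Task) -> bool:
    # Axis-aligned rectangles overlap when they overlap on both axes.
    ax0, ay0, ax1, ay1 = a.zone
    bx0, by0, bx1, by1 = b.zone
    return ax0 < bx1 and bx0 < ax1 and ay0 < by1 and by0 < ay1


def find_crew_conflicts(tasks):
    # Report every pair of tasks where different crews share space and time.
    return [
        (a.name, b.name)
        for a, b in combinations(tasks, 2)
        if a.crew != b.crew and overlaps_in_time(a, b) and overlaps_in_space(a, b)
    ]


schedule = [
    Task("Frame level 2 east", "carpenters", 10, 15, (0, 0, 20, 10)),
    Task("Rough-in electrical east", "electricians", 13, 18, (15, 0, 30, 10)),
    Task("Rough-in plumbing west", "plumbers", 13, 18, (40, 0, 60, 10)),
]

conflicts = find_crew_conflicts(schedule)
print(conflicts)
```

The logic itself is trivial. The expensive part today is producing and maintaining the geometry and schedule links that feed it, which is the part that would need to become cheap.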

What happens when this becomes cheap and agentic? Well it’s obvious that this would completely change scheduling and project controls, but that’s the least of it. In 20 years, these time and geospatial dimensions are the core aspects of how a construction team will manage fleets of robots to deploy into a build. Everything is downstream of this.

And yet, BIM remains a time intensive asset that today has not been amenable to these techniques. Why is that? Let me give you my thoughts, often synthesized from others.

Agentic BIM is a kernel problem

Here’s a fun experiment: what happens if you give an LLM a Revit .rfa file and ask it to perform an operation, perhaps lengthening a component? This seems reasonable, right?

Agents fail almost completely. It’s essentially hopeless. That alone is not a sufficient analysis. Why do they fail so completely? Is geometry simply too complex for the current agent generation? Not really. There’s more going on here.

First, Revit doesn’t natively support interoperability. And in fact, geometry kernels writ large are not very interoperable. IFC, the industry’s attempt to standardize BIM files, has been useful, but by and large there are conversion issues when moving files between different BIM systems. This is primarily due to how legacy geometry kernels represent geometry.

Revit and other CAD/CAM software work by modeling b-reps, or boundary representations. In other words, they model the boundary of a shape (its faces, edges, and vertices), and their kernel implementations then derive the shape to be built from that boundary. Suffice it to say, translating from one b-rep based system to another can be incredibly lossy. As a result, there’s almost zero training value in giving models b-rep based files: they mostly aren’t learning geometry or geospatial awareness, but instead something to the effect of “how one BIM provider would implement geometry”.

The best writer and practitioner on this is Blake Courter:

Above, we assumed that the AI could somehow make sense of the CAD geometry. It turns out, that’s also a hard problem.

Let’s say we want to represent a closed shape like a circle. In traditional CAD, we’d model it as a loop that joins the start and the end of a curve or list of chained curves. There’s going to be at least one point where there’s a “parametric seam.”

Now, we might want to know whether a given point is inside or outside of our shape, which is the most basic test in representing a “solid model”. For example, a 3D printer needs to put material where the shape is and other stuff where it is not. How can we reason about this problem? We need an algorithm!

One algorithm might be to pretend that the point is like a cow in a fenced-in pasture and to test if it can escape. We could shoot a ray from the point off to infinity in one direction. If we cross the boundary of the shape an odd number of times, we must be inside the fence, and if an even number, outside.

But does the algorithm always work? What if one of the rays is just tangent to the shape? What if we make a mistake around the parametric seam? Apparently arbitrary cases cause us to get the answer wrong. And indeed, most traditional CAD kernels answer this kind of question incorrectly often enough to cause issues that require the attention of CAD specialists.

All this arbitrariness becomes an impediment to training AI models on CAD data. Not only does the AI need to understand the design, but also, to do anything more than produce output, it needs to understand how the geometry engine will manipulate the geometry, blowing up the latent space.

The entire article is worth a read, but note the challenge here, which isn’t unique to agents: a boundary representation of an object is simply not sufficient for an agent, an algorithm, or often even the current kernels themselves to reliably reason about the actual geometry of the object.2
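The cow-in-a-pasture test from the quote can be sketched in a few lines. This is a toy 2D polygon version written for illustration, not how Revit or any production kernel implements it; the function names and the diamond shape are assumptions. The first function counts crossings naively, the second adds one arbitrary-looking tie-breaking rule for rays that pass exactly through a vertex.

```python
def inside_naive(point, polygon):
    """Even-odd ray casting with no special handling of vertices."""
    x, y = point
    crossings = 0
    n = len(polygon)
    for i in range(n):
        (x1, y1), (x2, y2) = polygon[i], polygon[(i + 1) % n]
        if y1 == y2:
            continue  # horizontal edge: skip to avoid dividing by zero
        # Count the edge if the ray's height falls anywhere on it,
        # endpoints included. A shared vertex gets counted twice.
        if min(y1, y2) <= y <= max(y1, y2):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x_cross > x:
                crossings += 1
    return crossings % 2 == 1


def inside_half_open(point, polygon):
    """Same test, but each edge owns only one of its endpoints."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        (x1, y1), (x2, y2) = polygon[i], polygon[(i + 1) % n]
        # The edge counts only if its endpoints fall on opposite sides
        # of the ray, where "exactly on the ray" is treated as below.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x_cross > x:
                inside = not inside
    return inside


# A diamond with corners on the axes. The test point is clearly inside,
# but a ray shot in +x passes exactly through the corner at (1, 0).
diamond = [(0, -1), (1, 0), (0, 1), (-1, 0)]
p = (-0.5, 0.0)

print(inside_naive(p, diamond))      # wrong answer
print(inside_half_open(p, diamond))  # right answer
```

The fix is a single comparison, but it is exactly the kind of convention that differs from one kernel to the next, and real kernels have to make such choices for curved surfaces, seams, and tolerances as well.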

Multiply this frustration by tens of thousands of objects inside a building file, and we can start to grok the magnitude of the problem with b-rep based systems for representing geo-spatial data across construction and engineering.

Suffice to say, it turns out the problem isn’t just “train agents on existing BIM/CAD data”, the problem is largely “we need a different approach altogether to train agents and ultimately enable the creation of high fidelity geometric assets and geospatial awareness in LLMs.”

But note here, the payoff. This problem currently occurs across every single engineering and construction workflow.

So what is the better path, if one exists at all?

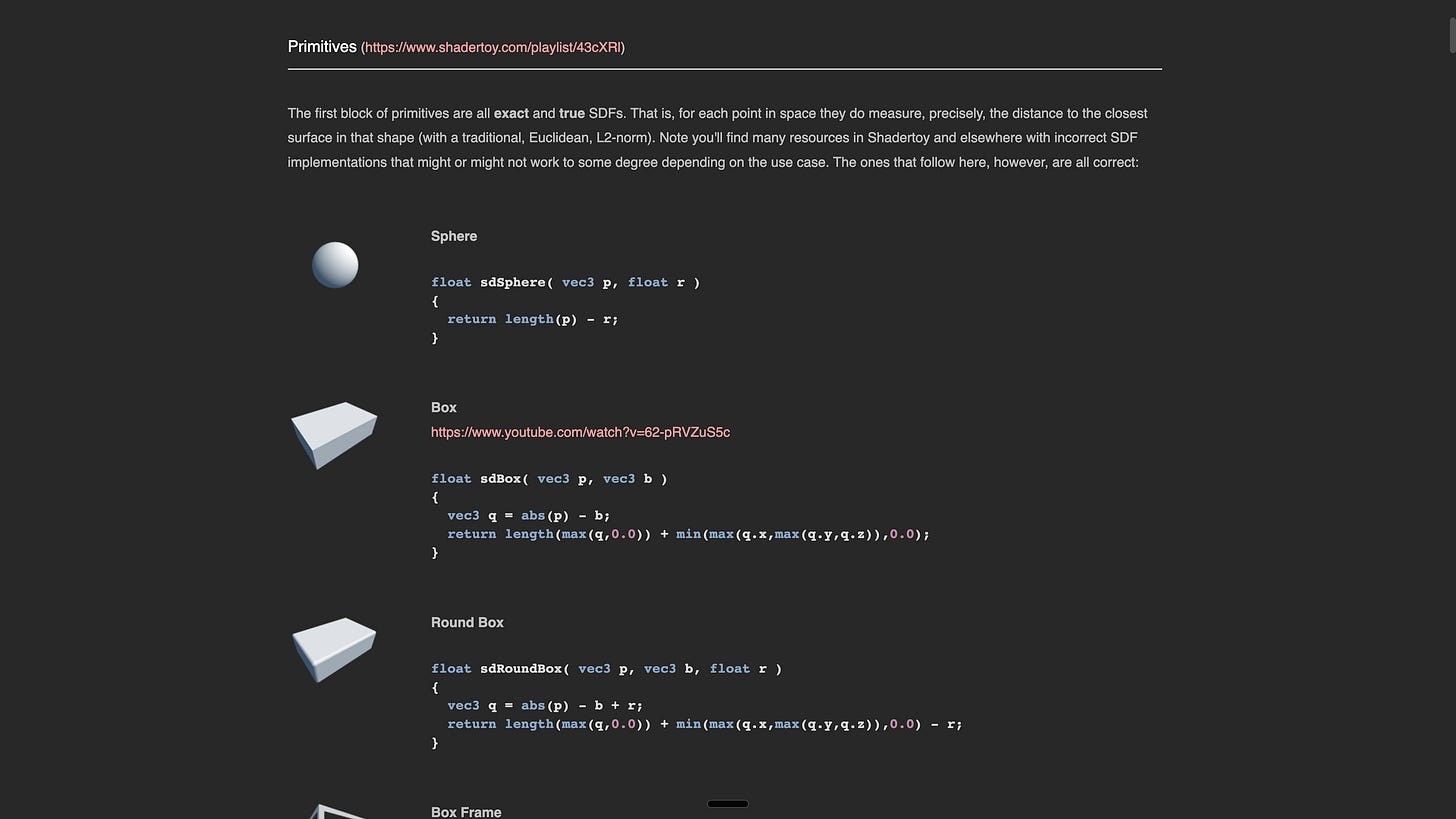

Well, Courter and others have outlined a potential remedy here too: implicit geometry.

Implicit Geometry

If we ask ourselves how to build geometry kernels that a) ultimately produce better models and b) are useful to agents, both as training data and in application, a few core observations emerge:

We need to treat geometry as code (I’m blatantly stealing this from Courter by the way, all credit to him).

Practically, this means that we always know, without a doubt, whether the geometry is going to compile. We aren’t reliant on understanding the tens of millions of lines of custom code that geometry kernels have implemented to handle edge cases around b-rep.

LLMs can reason over parametric relationships, and changing one variable (lengthening a slab) instantly adjusts the rest of the model as well.

We likely want the geometric representation to be primarily math based. Agents are increasingly great at math and code.

Implicit geometry fulfills both of these conditions. In the interest of brevity, for our purposes, any object can be represented as a mathematical function. Operations on these functions can then be performed via code.
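As a rough illustration of "objects as functions, operations as code", here is a minimal sketch using signed distance functions, one common flavor of implicit geometry. A shape is a function that returns a negative number inside, a positive number outside, and zero on the surface. The `box`, `union`, `difference`, and `building` helpers, the dimensions, and the toy slab-plus-wall model are all assumptions made for this example and are not taken from Courter’s work or any particular kernel.

```python
import math


def box(center, size):
    """Signed distance to an axis-aligned box (size = full extents, meters)."""
    cx, cy, cz = center
    hx, hy, hz = (s / 2 for s in size)

    def sdf(x, y, z):
        qx, qy, qz = abs(x - cx) - hx, abs(y - cy) - hy, abs(z - cz) - hz
        outside = math.sqrt(max(qx, 0) ** 2 + max(qy, 0) ** 2 + max(qz, 0) ** 2)
        inside = min(max(qx, qy, qz), 0.0)
        return outside + inside

    return sdf


def union(a, b):
    # A point is in the union if it is inside either shape.
    return lambda x, y, z: min(a(x, y, z), b(x, y, z))


def difference(a, b):
    # A point is in (a minus b) if it is inside a and outside b.
    return lambda x, y, z: max(a(x, y, z), -b(x, y, z))


def is_inside(shape, point):
    return shape(*point) < 0


def building(slab_thickness, wall_height=3.0):
    """Toy model: a 10 m x 10 m slab with an opening, and a wall on top."""
    t = slab_thickness
    slab = box((0, 0, t / 2), (10, 10, t))
    opening = box((0, 0, t / 2), (1, 1, t + 1))
    # The wall's base is defined relative to the slab's top surface,
    # so it moves whenever the slab thickness changes.
    wall = box((0, 4.9, t + wall_height / 2), (10, 0.2, wall_height))
    return union(difference(slab, opening), wall)


INCH = 0.0254
before = building(0.2)
after = building(0.2 + 1.5 * INCH)  # thicken the slab by an inch and a half

near_wall_top = (0, 4.9, 3.22)
print(is_inside(before, near_wall_top), is_inside(after, near_wall_top))
```

The inside/outside question is a single function evaluation with no ray to shoot and no seam to trip over, and changing one parameter re-derives the whole model. What this sketch leaves out is turning the function back into drawings and meshes.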

Why hasn’t this already been done?

There was never a fully compelling reason to move geometry off of b-rep kernels prior to the advance of agents. If humans would mostly be manipulating a UI either way, whether on a b-rep or an f-rep (function representation) based kernel, there wasn’t much of a case for moving in this direction.

There are some true problems around meshing objects. As Matt Keeter puts it, “good meshing of arbitrary implicit surfaces remains an unsolved problem!”3

But in my view, the time is now to fully figure these problems out. First, while engineering CAD and BIM have different needs, the EV in each of these industries is huge. There’s also a colorable argument that simulations of worlds will be built via new f-rep based kernels.

That is, if implicit geometry and subsequent training on these data sets yields agents that can perform geospatial operations at token speed, the ramifications may be far larger than simply BIM and CAD.

Gaming engines: to what extent does implicit geometry enable faster iteration and development of gaming assets and games?

Robotics: Does the sim2real problem get solved by enabling mass simulation of real world environments that can be scaffolded in mere minutes and then RL’d against? I don’t know the answer, but in my view the progress that Physical Intelligence and General Intuition are making raises this question.4

And in short, that may be the right expected payoff. In some sense, agents that can represent the physical world geospatially can train against the same world.5

And in the future, it’s not hard to imagine agents in the cloud coordinating with robots on the ground to plan the work to be accomplished, all with the same BIM model.

Anyways, if you want to chat around these ideas, as always please reach out.

I’m going to go ahead and ignore the sociotechnical problems in BIM in this piece, but please note that there are other issues that are not reducible to “agents can’t write BIM”. My main contention however is that if agents can write BIM, a lot of these sociotechnical problems will get solved fairly quickly.

The world model research here is interesting in my view. But I’ll leave it aside for now.

Yes, this is reductionistic.

“Bullshit information modeling” as a customer once described it. Lots of human tension across the value chain while using BIM. It’s everywhere, yet no one really likes it. Maybe this version would actually “work”?

This is a great read.

Recently, I moved (2) walls in a large commercial project's Revit model 1.5" each.

This change to the BIM required revisions on (7) Architectural and (3) Structural drawing sheets, and (6) business days elapsed before I sent our revised drawings to contractor.

Cumbersome.